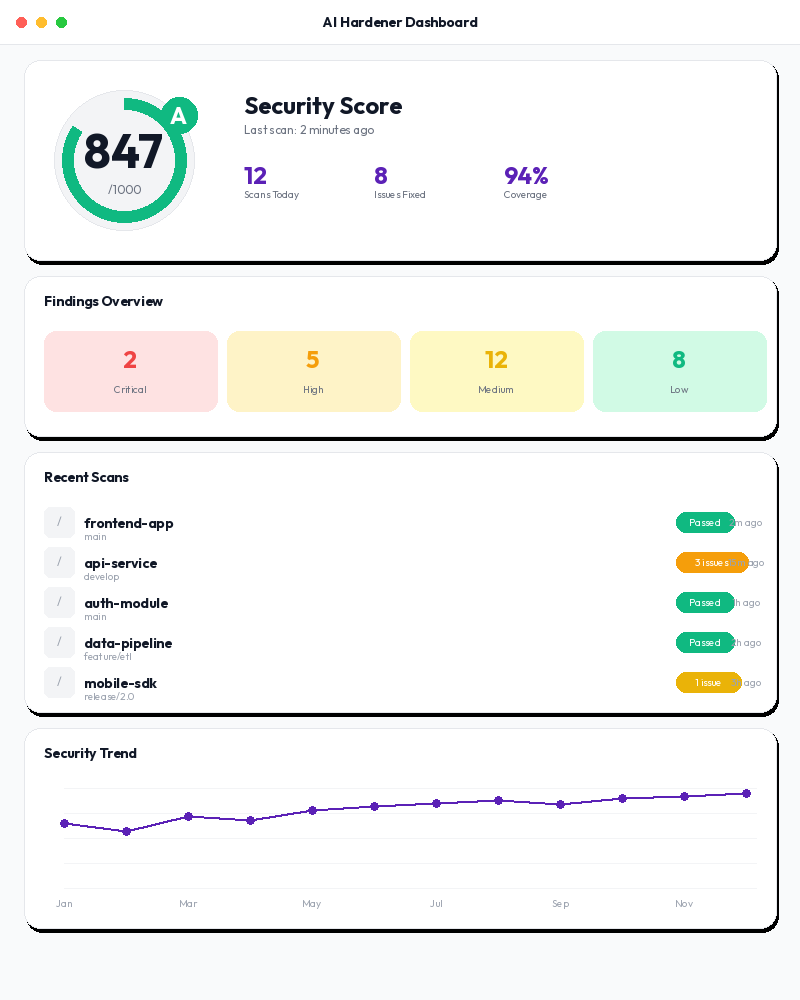

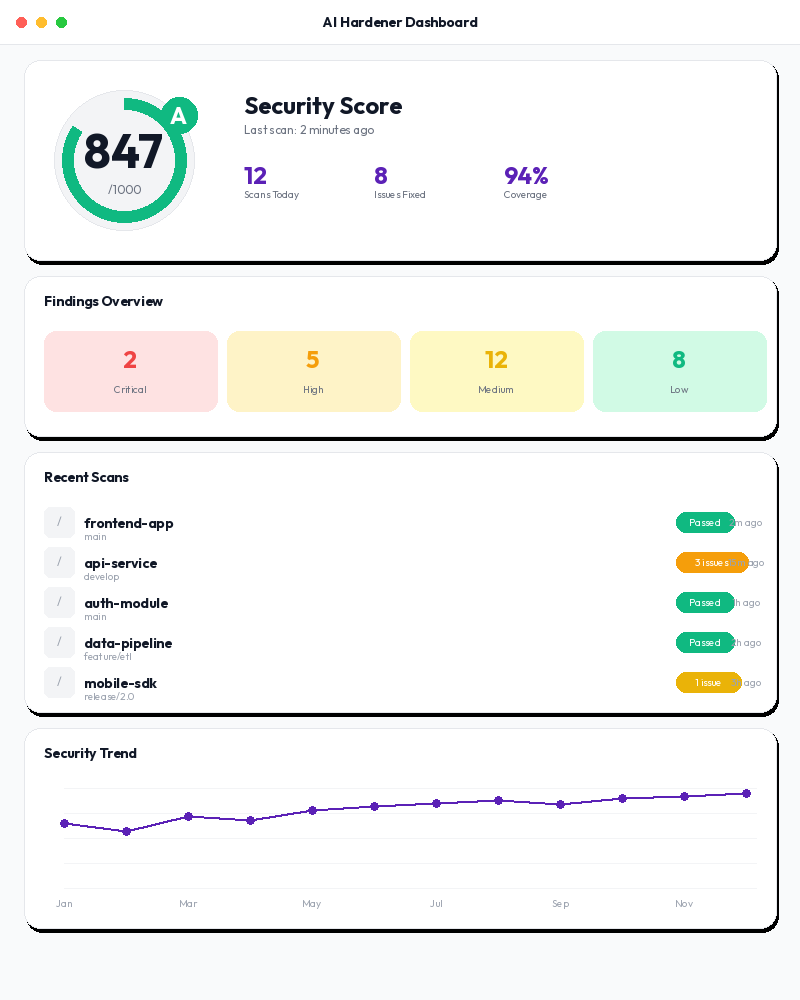

Harden Your AI

Security assurance for AI-first developers. Detect prompt injection, prevent jailbreaks, secure RAG pipelines, and test AI agents — all from a single platform.

Powered by 10 specialized AI security scanners

Every AI-generated feature can ship with new vulnerabilities, and traditional security tools don't understand prompts, LLM outputs, or agent behavior.

Attackers manipulate your prompts to bypass safety controls and extract sensitive data from your AI systems.

Adversarial inputs bypass model guardrails, leading to harmful, unauthorized, or data-leaking outputs.

Malicious data injected into retrieval pipelines corrupts AI responses and poisons your knowledge base.

Autonomous AI agents misuse tools, escalate privileges, and take unintended actions without human oversight.

Purpose-built AI security scanners covering prompt injection, red teaming, RAG security, agent testing, compliance, and model supply chain.

LLM Guard runs 35 input/output checks. Detect injection attempts, PII leakage, toxicity, and bias in real time.

Garak and DeepTeam run 100+ adversarial probes against your models. Test for jailbreaks, data exfiltration, and hallucination vulnerabilities.

Promptfoo evaluates 20+ vulnerability types, including prompt injection, jailbreaking, PII leakage, and hallucination. TypeScript-native and mapped to the OWASP LLM Top 10.

RAG Shield detects vector poisoning, embedding attacks, cross-tenant data leaks, and context window manipulation in your retrieval systems.

Agent Probe tests for OWASP Agentic Top 10 (ASI01-ASI10): goal hijacking, tool misuse, privilege escalation, and cascading failures.

Map findings to the EU AI Act, NIST AI 600-1 (the AI RMF Generative AI Profile), and ISO/IEC 42001. Verify model provenance with Sigstore signatures. Generate audit-ready reports.

From connection to compliance report in under 60 seconds.

Point AI Hardener at your LLM endpoint, RAG pipeline, or AI agent. Works with OpenAI, Anthropic, local models, or any provider.

    $ curl -X POST /api/v1/scans \
        -d '{"target": "https://api.example.com/v1/chat", "profile": "standard"}'

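For teams that script scans rather than call curl by hand, the same request can be sketched in Python. This is a minimal illustration, not the official client: the base URL `https://aihardener.example` is a placeholder (the page shows only the `/api/v1/scans` path), the `Content-Type` header is an assumption, and any authentication the API requires is not shown here. The sketch builds the request without sending it.

```python
import json
import urllib.request

# Hypothetical base URL: the page shows only the path /api/v1/scans.
BASE_URL = "https://aihardener.example"


def build_scan_request(target: str, profile: str = "standard") -> urllib.request.Request:
    """Build (but do not send) the POST request from the curl example."""
    body = json.dumps({"target": target, "profile": profile}).encode("utf-8")
    return urllib.request.Request(
        url=f"{BASE_URL}/api/v1/scans",
        data=body,
        # Assumption: the API expects a JSON body; the page does not say.
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_scan_request("https://api.example.com/v1/chat")
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen` would send it. The response format is not documented on this page, so the sketch stops before that step.
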
10 specialized scanners run in parallel. Quick scans finish in under 60 seconds. Comprehensive red-team scans cover 100+ attack vectors.
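
To illustrate what running scanners in parallel means in practice, here is a small sketch using a thread pool. The scanner functions, their names, and the shape of the findings are all hypothetical stand-ins; the platform's real scanners and scheduling are not described on this page.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

# Hypothetical scanner type: takes a target URL, returns a list of findings.
Scanner = Callable[[str], List[str]]


def injection_scanner(target: str) -> List[str]:
    # Stand-in for a real check; always reports one finding.
    return [f"possible prompt injection path at {target}"]


def rag_scanner(target: str) -> List[str]:
    # Stand-in for a real check; reports nothing.
    return []


def run_scanners(target: str, scanners: Dict[str, Scanner]) -> Dict[str, List[str]]:
    """Run every scanner concurrently and collect findings by scanner name."""
    with ThreadPoolExecutor(max_workers=max(1, len(scanners))) as pool:
        # Submit all scanners first so they run at the same time.
        futures = {name: pool.submit(scan, target) for name, scan in scanners.items()}
        # Then wait for each result; total time tracks the slowest scanner.
        return {name: future.result() for name, future in futures.items()}


results = run_scanners(
    "https://api.example.com/v1/chat",
    {"injection": injection_scanner, "rag": rag_scanner},
)
print(results)
```

Because the scanners are submitted together, wall-clock time is bounded by the slowest one rather than the sum of all of them, which is what makes a short quick-scan budget plausible.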

Plain-language findings mapped to OWASP LLM Top 10 and CWE. Actionable remediation guidance with compliance context for EU AI Act, NIST, and ISO 42001.

5 open-source community scanners + 5 proprietary scanners built for gaps no existing tool covers.

Input/output scanning (35 checks)

LLM red teaming (100+ probes)

LLM eval + red team (20+ vulns)

ML model supply chain security

Advanced multi-turn red teaming

OWASP Agentic Top 10 testing

RAG pipeline security analysis

Prompt template static analysis

EU AI Act, NIST RMF, ISO 42001

Model integrity & Sigstore

Five ways to integrate AI security into your workflow.

Ask in plain English. "Scan my LLM endpoint for prompt injection vulnerabilities."

Native integration for Claude Desktop and Cursor. 16 security tools at your fingertips.

Type /aihardener in Claude Code to scan your project for AI security vulnerabilities.

Full API access for automation. Trigger scans, retrieve findings, manage policies programmatically.

Add AI security scanning to your CI/CD pipeline. Block deploys that fail policy checks.
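
As a rough picture of how a CI/CD step might block a deploy on a failed policy check, the sketch below turns a list of findings into an exit code. The finding fields (`title`, `severity`), the severity names, and the `fail_on` threshold are assumptions made for illustration; the real findings schema and policy format are not shown on this page.

```python
from typing import Dict, List

# Hypothetical severity scale; the real platform may use different levels.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def policy_violations(findings: List[Dict[str, str]], fail_on: str = "high") -> List[Dict[str, str]]:
    """Return the findings at or above the severity that should block a deploy."""
    threshold = SEVERITY_RANK[fail_on]
    return [f for f in findings if SEVERITY_RANK[f["severity"]] >= threshold]


def gate(findings: List[Dict[str, str]], fail_on: str = "high") -> int:
    """Print blocking findings and return a process exit code (0 = pass, 1 = fail)."""
    blocked = policy_violations(findings, fail_on)
    for finding in blocked:
        print(f"BLOCKED: [{finding['severity']}] {finding['title']}")
    return 1 if blocked else 0


# Sample findings in the assumed shape, standing in for real scan output.
sample_findings = [
    {"title": "System prompt can be overridden by user input", "severity": "high"},
    {"title": "Verbose error message in model output", "severity": "low"},
]
exit_code = gate(sample_findings)
print("exit code:", exit_code)
```

In a pipeline, the script would pass `exit_code` to `sys.exit()`, since most CI systems treat a non-zero exit status as a failed step and stop the deploy.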

No credit card required. Upgrade when you need more projects and advanced features.

For individual developers exploring AI security.

For developers shipping AI features to production.

For teams building AI products with compliance requirements.

For organizations with advanced compliance and deployment needs.

"We were shipping LLM features without any security testing. AI Hardener caught prompt injection vulnerabilities in our RAG pipeline that we never would have found manually."

"The compliance mapping is a game-changer. We generate EU AI Act reports directly from scan results. What used to take our compliance team weeks now takes minutes."

"Agent Probe found that our customer service agent could be manipulated into executing unauthorized tool calls. That's the kind of vulnerability that could have been catastrophic."

Free tier includes 3 projects and 200 scans/month. No credit card required. See your first AI security findings in under 60 seconds.